AI Experience Design: Redefining Interaction in the Intelligent Era

The Evolving Role of AI in User Experience

👋 Hi, I’m Andre and welcome to my weekly newsletter, Data-driven VC. Every Tuesday, I publish “Insights” to digest the most relevant startup research & reports, and every Thursday, I publish “Essays” that cover hands-on insights about data-driven innovation & AI in VC. Follow along to understand how startup investing becomes more data-driven, why it matters, and what it means for you.

The AI era requires us to redefine every single aspect of our modern world. About two months ago, I wrote about “how AI changes the way we interact with data”, exploring how AI copilots might change knowledge workflows end to end. This piece triggered a range of inspiring conversations within our DDVC community. One such conversation was with my friend Pietro Casella, MD at EQT and one of the masterminds behind their Motherbrain platform.

We bounced ideas on the future of human-machine interactions and how AI requires a new paradigm of experience design. Today, I’m excited to have Pietro share an incredibly thoughtful guest post on this topic.

Thank you so much for putting your unique insights and visionary ideas into writing for us below!

AI and Our Need for New Interaction Models

When ChatGPT was released, I remember feeling mesmerized by the blinking cursor, the embodiment of one of the most remarkable inventions humans have ever made. For a moment, I suspended disbelief, wondering what was unfolding on the other side. I attempted to anticipate its responses, treating my subsequent questions like a strategic chess game, seeking its limitations.

Yet, there was an elegance in this pulsating square. Like a pause in a conversation, it represented the AI pondering its response. This simple choice was as effective and theatrical as Kubrick's use of a red lamp to depict HAL's physical existence.

As the landscape evolved, I reflected on the new shapes that software creators chose for AI to intuitively convey its power. This involved the search for metaphors, mental models, and representations, as well as concealing its flaws and embracing the exploratory nature of it all. Creators are making choices about their desired version of the future, and investors are reassessing their beliefs.

As with every software advancement, the prevailing interaction models are yet to be defined. However, it's crucial to understand the impact of these choices, their significance, viability and evolution. It's equally important to observe the evolving scaffolds and foundations around them, to comprehend the path of least resistance - the path that technology will likely follow.

Crafting the AI Experience, Beyond User Interfaces

Designing the user interaction experience with AI in your application is similar to User Experience design, but with an additional cognitive dimension. Interacting with AI is often like negotiating with a human; you express ideas and anticipate responses.

Your internal dialogue processes this interaction and plans, while your emotional response reacts and guides you. However, it can also be like interacting with a sophisticated machine, mechanical and predictable but doing things previously only expected from humans. You press a button, and it generates a paragraph or performs a task for you.

Designing in this spectrum, the AI interaction experience involves a series of choices. It starts with an innovative concept that encapsulates all your experiences and preferences, or the decision to apply a metaphor you've observed to see if it "fits the bill". Early AI applications focused on conversational UX, an effective metaphor which now seems basic as new approaches are emerging.

I've noticed that while some choices are logical, others are like trying to fit a round peg into a square hole. They lack the appropriate AI-problem fit. Even though they might still be intriguing and exciting, crafting the AI-experience will require more.

A common issue with AI-experience design arises from the gap between the application designer and the user. The designer comprehends the AI's mechanics, while the user might be encountering these features for the first time. The necessary affordances haven't been created yet.

Consequently, users, unaware of the backstory, perceive AI as a new device, influenced only minimally by its stated purpose. They strive to comprehend it and learn how to use it effectively, which requires adjusting their thinking to master its operation.

The classic user experience essentially involves interacting with visual artifacts that encourage engagement. Through this interaction, users try to create a mental model, often drawing comparisons to their previous experiences. The goal is to complete tasks efficiently; the faster the task is completed, the better the user experience.

Mental models represent a user's understanding of how the software functions, its degrees of freedom, and its responses to inputs. When new technology is introduced, it often feels like a complex puzzle box waiting to be explored. There's a tension between the developer's desire to explore innovative features and the user's need to understand how it works.

What, then, are examples of emerging mental models in AI applications? Which problems do they solve, and what do they imply for the future? I will dive into that in the following sections.

Space for Thoughts: Enhancing AI Responsiveness

AI models have latency: the time they take to perform a task is perceived as slow for most use cases. Great leaps are being made in performance, for example with faster machines, faster models, or other techniques, but there will always be plenty of opportunities for applications to use more AI. Cost will also be a consideration.

So how do we manage wait time in a better way? A few clever UX models are emerging, such as the typing effect in conversational UX as a way to manage expectations, or the “is typing…” indicator present in most real-time applications.

Another intriguing solution to the waiting time issue is to reveal the inner workings of the AI. I particularly appreciate the reverse blurring effect introduced by diffusion models, which is now found in some text applications.

Emerging methods for time management may incorporate more use of animation, anthropomorphisms like "thinking utterances" during a conversation (e.g., "Hmm, let me think" statements), or traditional asynchronous design, such as notifications.
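To make two of these patterns concrete, here is a minimal Python sketch of the typing effect combined with a "thinking utterance". It assumes a hypothetical `fake_model_stream` generator standing in for a real streaming model API; the function names and the filler text are illustrative and not taken from any product.

```python
from typing import Iterable, Iterator


def fake_model_stream(prompt: str) -> Iterator[str]:
    """Hypothetical stand-in for a streaming model API: yields word-sized chunks."""
    for word in f"Here is a short answer about {prompt}.".split(" "):
        yield word + " "


def with_thinking_utterance(
    chunks: Iterable[str], utterance: str = "Hmm, let me think... "
) -> Iterator[str]:
    """Emit a filler utterance immediately, then pass through the real chunks."""
    yield utterance
    yield from chunks


def render(chunks: Iterable[str]) -> str:
    """Print chunks as they arrive (the typing effect) and return the full text."""
    shown = []
    for chunk in chunks:
        print(chunk, end="", flush=True)
        shown.append(chunk)
    print()
    return "".join(shown)


text = render(with_thinking_utterance(fake_model_stream("latency")))
```

The filler is shown before the first real chunk arrives, so the user sees activity right away even if the model is slow to start.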

A less common method of managing time occurs behind the scenes with optimistic prompting. As a user types a prompt, some applications attempt to predict the sentence and compute a response in advance, creating the perception of an instantaneous answer. This approach is akin to creating an inner voice for the AI, which is a highly relevant solution.
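As a rough illustration of optimistic prompting, the sketch below precomputes an answer for a predicted prompt while the user is still typing. Everything here is an assumption made for the example: the `OptimisticResponder` class, the prefix-based predictor, and the `slow_answer` stub that stands in for a real model call. A real application would likely run the speculative call in the background rather than synchronously.

```python
from typing import Callable, Dict, Optional


class OptimisticResponder:
    """Precompute an answer for the predicted prompt while the user is typing."""

    def __init__(
        self,
        predict: Callable[[str], Optional[str]],
        answer: Callable[[str], str],
    ) -> None:
        self.predict = predict  # partial text -> predicted full prompt, or None
        self.answer = answer    # full prompt -> response (the slow call)
        self.cache: Dict[str, str] = {}
        self.slow_calls = 0     # counts how often the slow call actually ran

    def _compute(self, prompt: str) -> str:
        self.slow_calls += 1
        return self.answer(prompt)

    def on_keystroke(self, partial: str) -> None:
        """Speculatively answer the predicted prompt, once per prediction."""
        predicted = self.predict(partial)
        if predicted is not None and predicted not in self.cache:
            self.cache[predicted] = self._compute(predicted)

    def on_submit(self, prompt: str) -> str:
        """Return the cached answer if the prediction was right, else compute now."""
        if prompt in self.cache:
            return self.cache[prompt]
        return self._compute(prompt)


# Hypothetical predictor: complete from a fixed list once the prefix is unambiguous.
KNOWN_PROMPTS = ["summarize this page", "translate this page"]


def predict_by_prefix(partial: str) -> Optional[str]:
    matches = [p for p in KNOWN_PROMPTS if p.startswith(partial)]
    return matches[0] if len(matches) == 1 and len(partial) >= 3 else None


def slow_answer(prompt: str) -> str:
    return f"[answer to: {prompt}]"  # stand-in for a real model call


responder = OptimisticResponder(predict_by_prefix, slow_answer)
for partial in ["s", "su", "sum", "summ"]:
    responder.on_keystroke(partial)
result = responder.on_submit("summarize this page")
```

The trade-off is cost: every wrong prediction is a wasted model call, so the predictor's confidence threshold matters as much as its accuracy.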

💡 How will this new hybrid UX (synchronous/asynchronous) evolve? How can it be choreographed with background thinking activities? Will we have a concept of AI initiating interactions with humans? Which technologies better enable this mixture of background and foreground work?

To Reveal or Conceal: the Visibility of AI

In most auto-completion interfaces you interact with an AI, but a rather anonymous one. You perceive it more as a feature of the editor than an intelligent model. This feels quite natural but some designers have chosen to make the AI more explicitly visible, creating an impression of an intelligent agent. In this scenario, it's not the software but rather the bot that is perceived as intelligent. So, the question to consider is whether you want the AI to be visible or not.

Browse.ai introduced their scraping agent as a floating assistant. This design reminds me of Microsoft's Clippy assistant from the late 1990s and early 2000s, which seems like a fitting choice for a webpage scraping helper. The use of this nostalgic UX approach can be quite entertaining.

However, one must consider the extreme case where the implementation of AI becomes forced and artificial. It's akin to the movie "Airplane!" where the autopilot is depicted as an inflatable pilot, rather than a function of the plane itself. A smoother user experience will integrate the AI seamlessly, making it a substantive part of the software, rather than an artificial add-on.

💡 Innovation in user experience design is still in its early stages, particularly when it comes to effectively portraying AI functionality and navigating its limitations. We can expect more creative solutions to emerge, like "presence" indicators, typography, implicit AI, generative UX and more. Designing for latency will enable apps to have more space for thoughts.

Seamless Integration: the Dilemma of How Much to Reveal

The decision of whether to make AI visible is significant. While some developers feel pressured to showcase the sophistication of AI, over time, AI should become more subtle and serve as an unobtrusive building block of the product.

It might make sense to highlight the AI underpinnings if you want to evoke an additional level of awareness, especially when users need to verify the output or when you want to emphasize the "magical" nature of the functionality. However, in many instances, AI is irrelevant to the product's function.

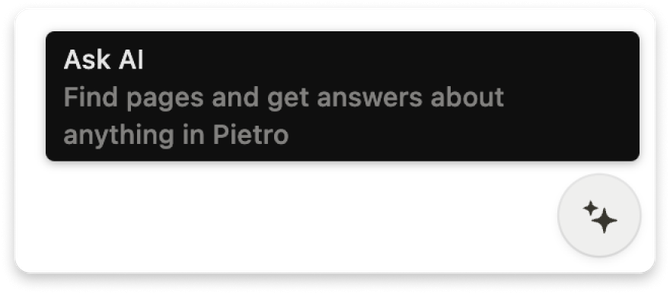

For instance, in Notion, users are made aware of AI's presence; you "Ask AI" to perform tasks for you. This makes sense because it is common for a writer to ask a friend for an opinion about a draft version of an article.

In contrast, Linear's application of AI presents you with Duplicates without disclosing that an AI method is operating behind the scenes. In search use cases, what matters most is obtaining relevant results, regardless of the method used. The careful use of copy - “Possible” duplicates - introduces the right level of awareness to the user.

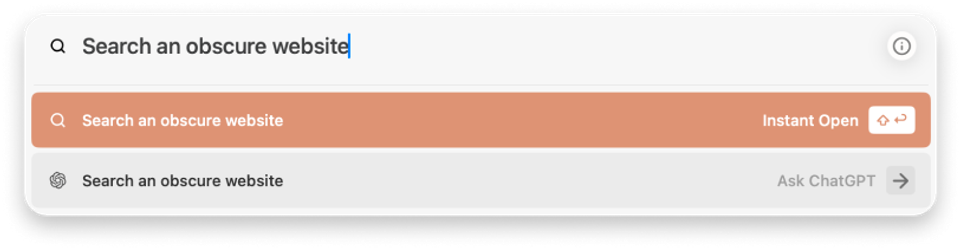

One example I appreciate is the 'Instant Open' feature in the Arc browser. 'Instant Open' demonstrates a behavior known as "agentic." This term describes an intelligent, multi-step process that completes tasks on your behalf. It's important to note that this contrasts with "Ask ChatGPT," where you are explicitly informed about the behavior's inner workings. I admire the attention to detail in their choice of copy, subtly setting users' expectations about what is happening.

💡 Mentioning AI is like exposing the engine: it is only relevant when it fits a purpose. Consider whether its relevance extends beyond marketing, but focus as much as possible on making AI part of the flow, like any other piece of the stack.

Predicting Thoughts with Auto Completion

Other UX implementations include typeahead, or gray suggestion text, which is accepted with a <tab> command. This option is subtle yet efficient, and it creates a sense of the AI intuitively understanding your intentions, which feels truly magical, especially when coding. It's effectively one of the most seamless implementations of AI that exists.

While this device isn't new, there are less common variations of this pattern. One variant offers the user multiple options, while another introduces a more active version with a prompt/response function or explicit actions like summarization buttons. Other systems show a pop-up with the suggestion, allowing you to refine it but sidetracking you into a conversational rabbit hole. Striking the correct balance of simplicity and functionality is crucial in this context.

💡 What are other ways to represent the inner thinking of AI? Thinking balloons? 💭 🤔 Expressions? Indicators? What is the equivalent of auto-completion for more complex workflows? Is it descriptive actions? As apps do more behind the scenes, further affordances for parallel thinking will be needed.

Embedding AI Deeply in User Tasks

One of the first embodiments of AI was the copilot, a device where users interact with an artificial agent to discuss a task. While initially used for "side conversations", this approach is progressively being applied directly to tasks. It's particularly useful when the task is essentially the conversation itself, such as in a support use case.

However, in many instances, I consider using a copilot a simplistic approach to integrating AI into the product. In most cases, it disrupts the flow and hinders the AI agent's understanding of the context of your question.

This happens, for example, when you're coding and need to leave the room to discuss a programming topic, or when you're writing and need to switch to a different user experience, copy some context, and then start the discussion.

A more engaging approach is to present the AI in context as an option to accomplish your task. One of my favorite AI features is hex.tech's in-context assistant that provides a convenient option.

One particularly elegant detail is the "rename with magic" option, which aids in maintaining organization of your project. Again, simple but effective and a more playful product copy.

One interesting detail is how Hex and Cursor present their AI suggestions using the commit color-coding affordance from Git, a very familiar UX for developers.

💡 With some exceptions, over time, copilots may be perceived as the AI-washed aspect of products where the project manager did not invest sufficient effort in redefining the user experience. It might be beneficial to bring the AI closer to the task in thoughtful and useful ways. What is the closest to the task that you can place your AI interaction?

Balancing AI Guesswork and User Input for Optimal Flow

Some AI systems, such as Alexa, Siri, or Rabbit, operate on the premise that the user should do very little and the AI will handle everything. They focus on engineering exceptional intent detection, and they assume that the AI can understand existing systems as they are and mostly figure out how to use them.

Rabbit is an example of a system that tries both to understand what you want, just like Siri, and to execute things on your behalf. It does not offer choice; it's a black box that you can prompt, and it aims to perform actions using RPA-like interactions behind the scenes.

The approach of making the user “ask” and conversationally clarifying intent seems simple and can be adequate in certain situations, but it can be problematic when the user's intent is unclear or there's insufficient context.

Most customer support agents solve this by offering options once the first interaction is established. Designers should assess if that approach and flexibility is better than offering options upfront.

Another issue is the potential lack of choice or difficulty in discovering features. It's important to remember that AI is a tool we don't fully understand yet, so the perceived lack of choice can be burdensome for users.

One solution to the discoverability issue could be to offer a few options, as seen in ChatGPT. Although it's not desirable to create something as complex as Yahoo (as opposed to Google's minimalist approach), having a starting point can be useful.

A major challenge with the lack of context is its impact on the AI's performance. For instance, if I say "call Pietro," the AI must determine which Pietro I'm referring to. This extra step could decrease performance over time or use up steps in the context window for dereferencing. Could there be a way for the user to express their intentions with minimal effort?

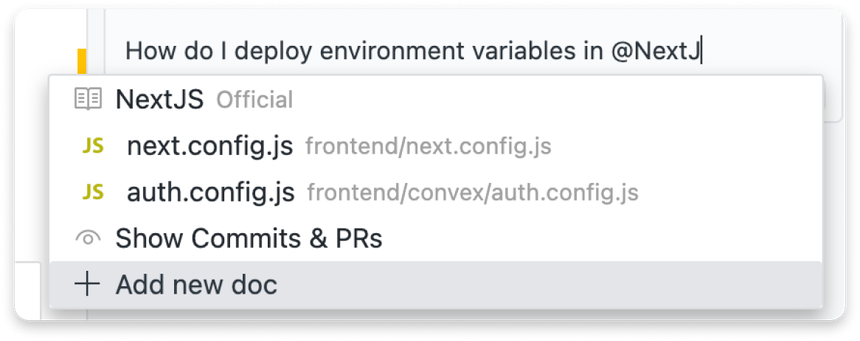

The issue of providing context is addressed through the smart use of affordances like mentions and slash commands. Cursor.so, a popular IDE, has incorporated this in various ways. In the example below, you can see how a specific knowledge base can be targeted with a question.

This ensures that the chatbot uses a pre-indexed data repository or file when crafting its answer. So, when you reference “next”, you can specifically inform the chatbot whether you're referring to the NextJS library or a particular file in your system. This proactive clarification helps narrow down the relevant context, so that every drop of the context window goes to input that is actually useful to the model.
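A minimal Python sketch of the mention affordance might look like the following. It is not how Cursor implements it; the `SOURCES` registry, the `@name` syntax, and the `resolve_mentions` helper are assumptions made for illustration.

```python
import re
from typing import Dict, List, Tuple

# Hypothetical pre-indexed sources; keys are what the user can @-mention.
SOURCES: Dict[str, str] = {
    "NextJS": "Indexed documentation for the NextJS library.",
    "next.config.js": "Contents of the local file next.config.js.",
}

# An @ followed by word characters, dots, or hyphens.
MENTION = re.compile(r"@([\w.\-]+)")


def resolve_mentions(message: str) -> Tuple[str, List[str]]:
    """Split a message into the plain question and the context it explicitly targets."""
    names = MENTION.findall(message)
    context = [SOURCES[name] for name in names if name in SOURCES]
    question = " ".join(MENTION.sub("", message).split())
    return question, context


question, context = resolve_mentions("@NextJS how do I configure redirects?")
```

Because the user names the source explicitly, the application can skip a disambiguation round trip and pass only the targeted context to the model.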

💡 When crafting your user experience, consider whether you'd like the user to believe that the AI comprehends their requests, or if you'd prefer to influence how the user formulates their queries with minimal adaptation to a new or existing affordance. Consider “asking more from the user” upfront to minimize the chattiness of the interaction and maximize context creation.

Food for Thought: Mastering AI Context Building

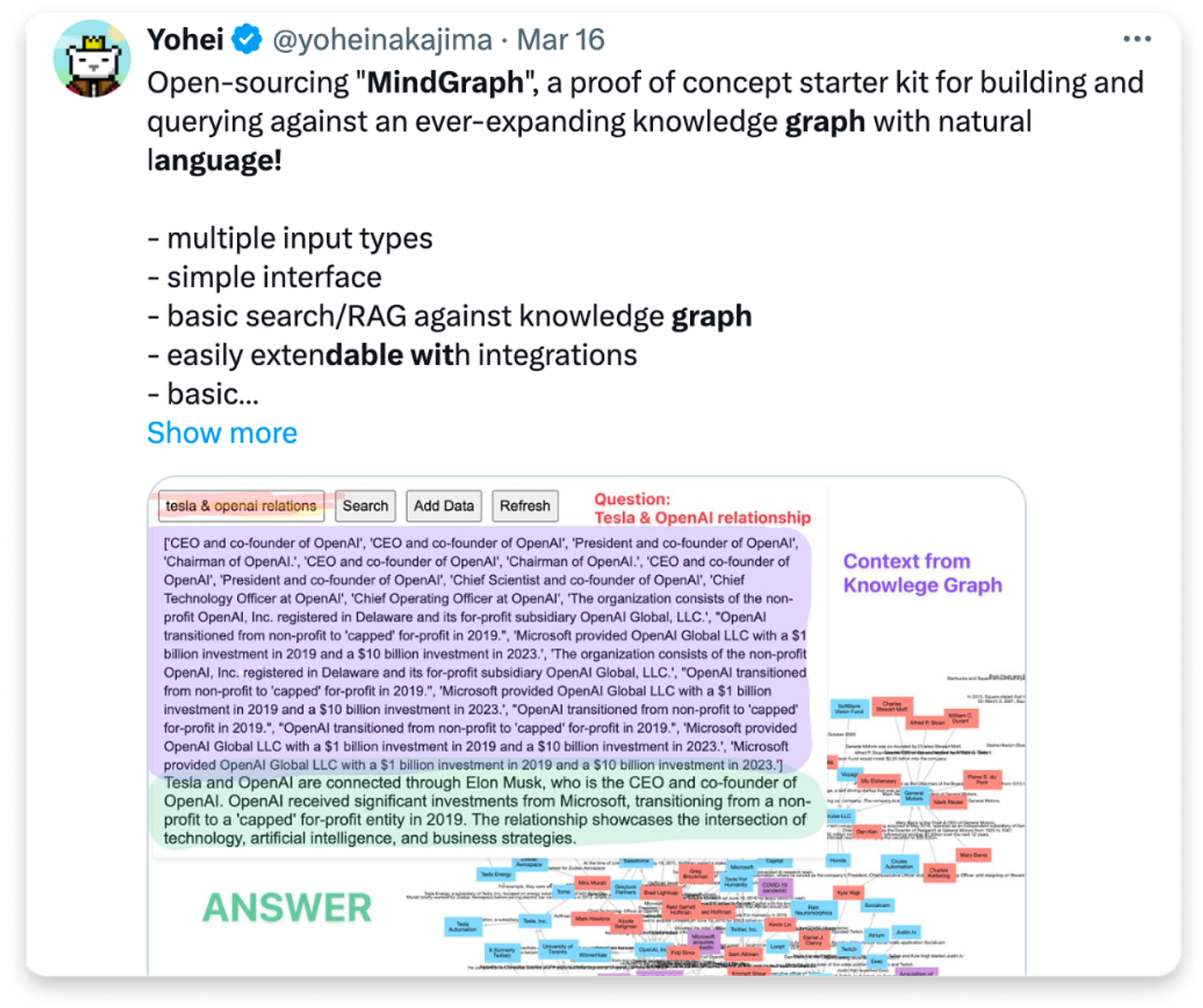

The majority of the effort in building an AI application is dedicated to constructing context. For a while, RAG (Retrieval-Augmented Generation) has meant a vector database with an indiscriminate amount of data. However, this approach has led to performance issues due to the complexity of the concept of similarity.

One example of the fragility of semantic similarity-based RAG pertains to the time dimension of knowledge. If a user says, "I don’t like chocolate, because…" in one Slack thread, and "I liked this chocolate" in another, it becomes hard to accurately answer the question "Does the user like chocolate?"

This is due to the simplistic nature of the semantic similarity approach, which doesn't account for the time dimension. The presence of inconsistent information in knowledge bases brings to mind the domain of non-monotonic logics. I predict this field will soon experience a resurgence as another example of revisionist science.

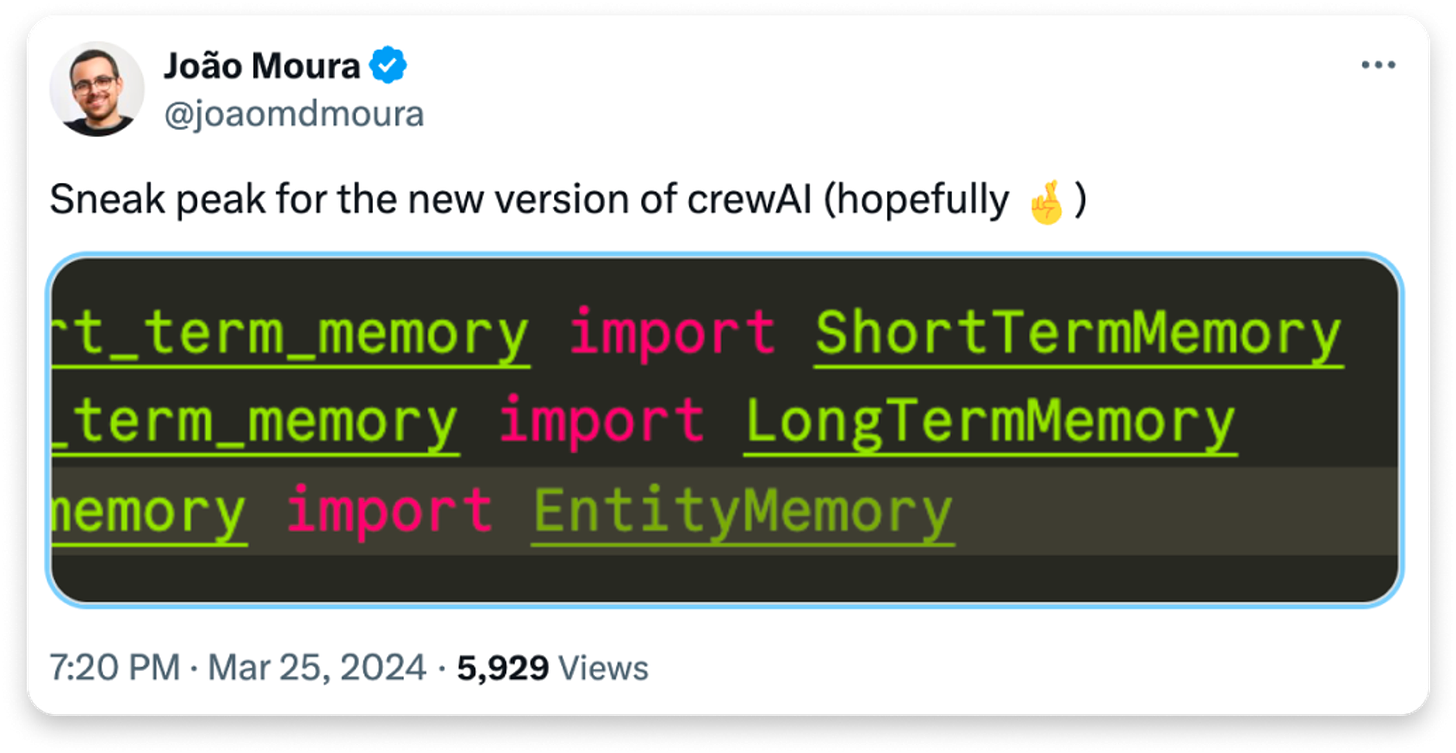

Therefore, new strategies, such as leveraging graph structures, identifying entities, or distinguishing between short and long-term memory, are emerging. The rationale is that if a question pertains to a specific node or concept in your knowledge base, a more nuanced approach than a simple cosine similarity may be more effective.
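One way to picture such a strategy is the Python sketch below, which filters memories by entity first and then discounts similarity by age, so the recent chocolate statement outranks the old one. The `Memory` structure, the two-dimensional toy embeddings, and the 30-day half-life are all assumptions chosen for illustration, not a recommended configuration.

```python
import math
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class Memory:
    text: str
    entity: str                  # what the statement is about, e.g. "chocolate"
    embedding: Sequence[float]   # toy vector; a real system would use a model
    age_days: float              # how long ago the statement was made


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def retrieve(
    query_embedding: Sequence[float],
    entity: str,
    memories: List[Memory],
    half_life_days: float = 30.0,
) -> List[Memory]:
    """Filter by entity first, then rank by similarity discounted by age."""
    candidates = [m for m in memories if m.entity == entity]

    def score(m: Memory) -> float:
        recency = 0.5 ** (m.age_days / half_life_days)  # halves every half-life
        return cosine(query_embedding, m.embedding) * recency

    return sorted(candidates, key=score, reverse=True)


memories = [
    Memory("I don't like chocolate, because...", "chocolate", [0.9, 0.1], 300.0),
    Memory("I liked this chocolate", "chocolate", [0.8, 0.2], 2.0),
    Memory("I like hiking", "hiking", [0.1, 0.9], 1.0),
]
results = retrieve([1.0, 0.0], "chocolate", memories)
```

Plain cosine similarity would rank the older statement slightly higher here; the recency discount is what surfaces the more recent preference.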

An intriguing aspect of these emerging patterns is that they are leading to new frameworks that model cognitive processes rather than just data chains. Concepts such as short vs long term memory, or entity vs knowledge, are generally more intuitive to understand than the abstract idea of a vector or a similarity distance threshold.

💡 Crafting the knowledge base of an AI application is a key differentiating factor for future applications. It's essential to experiment with structures and communicate these inner workings in a way that resonates with the user. Investigating human brain-inspired abstractions can trigger significant advancements in the development of intelligent systems.

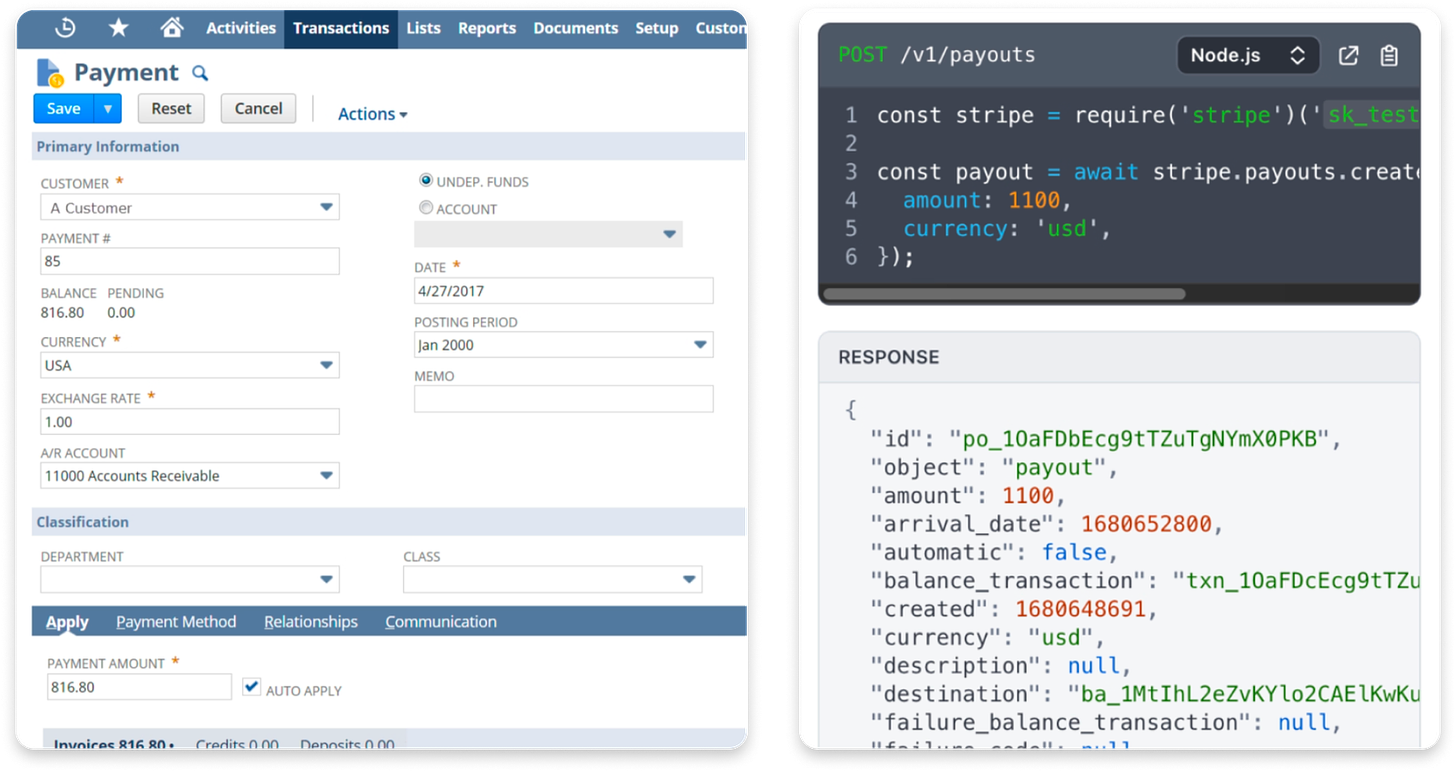

AI Body: Action Surface Design and the Emergence of AI-Centricity in Subsystems

Designers are exploring various methods for interaction with the world. Some favor implementations similar to RPA (Robotic Process Automation). For example, using GPT-4 Vision could enable a bot to analyze a user interface and identify clickable buttons. On the other end, some prefer Function APIs, building AI experiences atop existing API surfaces.

I predict that future applications will mostly be built on top of API surfaces or intermediate layers that expose functionality. Similar to how robots.txt guides web scrapers, future metadata will likely include declarative instructions on how to interact with the website for machine-to-machine communication.

Further supporting an API-centric, or rather AI-centric (as opposed to user-centric), future is the fact that most user interface functionality in business applications serves to prevent human error or add clarification (e.g., the total on an invoice screen). In a future where AI handles most tasks, much of this user interface will become less relevant.

💡 When designing your system, be very thoughtful about the action-taking surface you choose. How abstract can it be? Can you isolate your AI from it as a subsystem? Should you build a new body from scratch instead of leveraging legacy, user-centric technology?

Application Plasticity and the Emergence of Dynamic Software

AI's ability to dynamically invoke functions and write code opens up a range of new applications and ways of thinking about architecture. Unlike today, where developers predefine both the logic and user interface, it's possible to imagine a world where neither of these elements are predetermined.

One important recent innovation in dynamic user experience is Vercel’s Generative UI. This feature allows for experiences that go beyond conversation. In this model, developers use the AI’s function-calling API to ask "how should I invoke this function," and the AI generates the appropriate function parameters. The real beauty of this approach becomes apparent when you understand that React has evolved to treat a visual component as a function - a perfect match for this AI capability.

In its current form, Generative UI requires you to design the UX alternatives that the AI can select from. Essentially, the AI is "only" choosing the correct parameters to pass to the component. However, over time, it's conceivable that the AI could also write the optimal component for a given use case.
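The idea can be sketched in a few lines. The example below is written in Python rather than React and does not use Vercel's SDK; the component functions, the `COMPONENTS` registry, and the `fake_model` stub that returns a JSON tool call are all hypothetical stand-ins.

```python
import json
from typing import Any, Callable, Dict


def weather_card(city: str, temperature_c: float) -> str:
    return f"<WeatherCard city='{city}' temp='{temperature_c}C'/>"


def stock_chart(ticker: str, days: int) -> str:
    return f"<StockChart ticker='{ticker}' days='{days}'/>"


# The designer predefines the alternatives; the model can only choose among them.
COMPONENTS: Dict[str, Callable[..., str]] = {
    "weather_card": weather_card,
    "stock_chart": stock_chart,
}

FALLBACK = "<Text>Sorry, I cannot display that.</Text>"


def fake_model(user_message: str) -> str:
    """Hypothetical stand-in for a function-calling model: returns a JSON tool call."""
    return json.dumps(
        {"component": "weather_card",
         "args": {"city": "Stockholm", "temperature_c": 4.5}}
    )


def render_ui(user_message: str, model: Callable[[str], str] = fake_model) -> str:
    """Ask the model which component to show and with which parameters."""
    call: Dict[str, Any] = json.loads(model(user_message))
    component = COMPONENTS.get(call.get("component"))
    if component is None:
        return FALLBACK  # the model picked something outside the designed set
    try:
        return component(**call["args"])
    except (KeyError, TypeError):
        return FALLBACK  # missing or unexpected parameters


ui = render_ui("What's the weather in Stockholm?")
```

The model never produces markup itself in this sketch; it only selects a predefined component and fills in its parameters, which keeps the output within what the designer has approved.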

This ability also influences business logic and functionality. For instance, GPT's code interpreter tool effectively writes and executes code on the fly when prompted. Most "agent" systems behave similarly. As the performance of models improves, we can expect dynamic software to become a more common practice.

This is interesting because, just like our brains, the software will have more plasticity. The code will adapt dynamically to changes in facts or API surfaces, allowing the program to perform more tasks, perform them better, and improve its performance over time.

Consider an integration platform instructed to transfer data from system A to system B. If a new field is added to system A, a coder would typically need to update the integration code.

However, in the future, AI could write a new version of the integration code for each execution, effectively propagating any new field across apps without explicit developer intervention. This illustrates how transient software can lead to continuously optimized software.

💡 Crafting experiences with dynamic code and UX is one of the biggest unlocks in application design. Think about how you can refine your experiences beyond text for ultimate differentiation and to enhance perceived AI-problem fit.

Agentic Behavior and Beyond

The term Agentic application refers to the use of loops in programming where a program generates a set of instructions in a planning phase and then acts on these instructions, repeating this process until a certain goal is met. Early examples of this pattern include BabyAGI and its subsequent projects, as well as gpt-engineer.
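Stripped of the model calls, the loop itself is small. The Python sketch below shows the plan-act-repeat structure with toy stand-ins; `run_agent`, the `toy_*` functions, and the iteration cap are assumptions for illustration and are not taken from BabyAGI or gpt-engineer.

```python
from typing import Callable, List


def run_agent(
    goal_met: Callable[[List[str]], bool],
    plan: Callable[[List[str]], List[str]],
    act: Callable[[str], str],
    max_iterations: int = 5,
) -> List[str]:
    """Plan, act on each planned step, and repeat until the goal is met."""
    history: List[str] = []
    for _ in range(max_iterations):  # hard cap so the loop cannot run away
        if goal_met(history):
            break
        for step in plan(history):   # planning phase: decide the next steps
            history.append(act(step))  # acting phase: execute and record
    return history


# Toy stand-ins; in a real agent, plan and act would call a model and tools.
def toy_plan(history: List[str]) -> List[str]:
    return [f"look up item {len(history) + 1}"]


def toy_act(step: str) -> str:
    return f"done: {step}"


def toy_goal(history: List[str]) -> bool:
    return len(history) >= 3


result = run_agent(toy_goal, toy_plan, toy_act)
```

The iteration cap is the simplest guard against the tangents that early agent experiments were known for: if the goal check never passes, the loop still terminates.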

At first, this pattern appeared experimental, with early versions often veering off into irrelevant tangents. However, it's now maturing and being recognized as a tool rather than an end goal. Companies are beginning to use it to build research agents or in advanced search products like Perplexity or Arc Browser, which conduct targeted searches for you. GPTs also exemplify this when they choose to execute actions. Cursor.sh has incorporated this agentic behavior into their chat interface. When it detects a troubleshooting question, it seamlessly reflects on its tasks, formulates plans and actions before executing them.

Currently, the agent paradigm is primarily request-based: you assign a task and the agent executes it. However, an alternative approach is emerging where agents analyze the data being generated and proactively interact with the user. For instance, while typing a text, such an agent may provide suggestions, similar to a peer-reviewer. This new model is beginning to appear in developer tools that proactively analyze your code and provide suggestions, similar to a linter checking that you are following correct conventions but smarter.

💡 The Agent abstraction incentivizes prompt specialization and problem decomposition. What are the right thought loops you can add to your products? What is an agent in the context of your application, and what are the personas you want them to incorporate?

Towards a More Cognition-Driven Developer Experience

Many development abstractions today aim to abstract the raw layers of AI. These abstractions are coded as APIs, vector searches, or function calls. However, there's a need for better ways to express behavior using abstractions inspired by the cognitive abilities being modeled. This includes shifting from "read" to "see", "list" to "reason", "search" to "recall", and "write" to "say", "solve", "act". Finding ways to model behavior that are less machine-centric will be crucial in designing future systems.

Furthermore, not only logical intelligence but also emotional intelligence should be leveraged. It's essential to consider how to best persuade or engage in second-order thinking, such as understanding the user's feelings that led to their statement. Hume.ai APIs provide developers with additional signals to understand emotional content and express emotional intent in their statements. How can we utilize this to create better, more useful experiences? Will there be a world where I can dial up or down cognitive characteristics of

💡 The AI engineering experience will evolve dramatically in the coming years. Planning your architectures for continuous evolution will be key to retaining a competitive advantage.

Closing Remarks

In drafting this article, I've made observations about my software preferences, aiming to construct a language for further discussion on emergent AI experiences. Ultimately, the quality of these experiences hinges not only on AI's sophisticated use but primarily on how well it addresses the problem at hand - a concept I refer to as the "AI problem fit."

The "AI problem fit" is the ultimate measure of an application's perceived AI uniqueness. There are many facets to this, and I plan to elaborate on them in future articles.

It is crucial to note that the aspects mentioned above are not exhaustive, as decisions are made daily. These choices intersect with other emerging affordances and ever more commoditized stacks, like multiplayer capabilities, user experience quality, speed, price, and more. The contemporary AI experience designer should consider how to optimize all these elements collectively.

In essence, it's all about striking a balance and crafting the best possible creation with a profound appreciation for the art. I eagerly anticipate seeing what application designers will come up with. Please let me know about any aspects that you find captivating or that occupy your time in emerging applications.

This is it for today. I hope you enjoyed this thought-provoking guest post and got some inspiration for the future of user experience and software interaction in the intelligent AI era.

Pietro will also be speaking at the virtual Data-Driven VC Summit 2024 5-8th May where he’ll share his hands-on experience from pushing the boundaries at the forefront of AI in VC at EQT.

Stay driven,

Andre

PS: We’ll also have Yohei Nakajima, the inventor of BabyAGI, speak at the Data-driven VC Summit 2024 about “AI Agents: Building a VC Copilot”
