10 Predictions About The Future of AI

DDVC #67: Where venture capital and data intersect. Every week.

👋 Hi, I’m Andre and welcome to my weekly newsletter, Data-driven VC. Every Thursday I cover hands-on insights into data-driven innovation in venture capital and connect the dots between the latest research, reviews of novel tools and datasets, deep dives into various VC tech stacks, interviews with experts, and the implications for all stakeholders. Follow along to understand how data-driven approaches change the game, why it matters, and what it means for you.

Current subscribers: 16,910, +360 since last week

Brought to you by TechMiners - The data-driven Technology Due Diligence Provider

TechMiners is a data-driven Technology Due Diligence provider, offering trusted advisory services from experienced CTOs and providing in-depth insights powered by proprietary data analytics software.

Looking into AI, ECM and Open Source? Get the TechMiners Tech Due Diligence Guides to these topics.

2023 will likely be remembered as The Year of AI. Even though the term was coined nearly 70 years ago and even though we’ve achieved big milestones across algorithms, computing, and data since then (check out my earlier post “How to Not Miss the AI Train: Essentials You Need to Know”), it took the launch of ChatGPT in November 2022 to bring AI to the masses. Zooming out, 2023 was most likely the year of AI attention and 2024 will become the year of AI adoption.

In an era where attention is all you need ;) I’m often still surprised by how much noise and how little signal there is in our wonderful world of AI. Having researched, developed, and invested in AI for more than a decade myself, and being privileged to regularly learn from and interact with the best experts in this field, I decided to condense my learnings and share tangible predictions for 2024 and beyond. An attempt to provide more signal in a world full of noise.

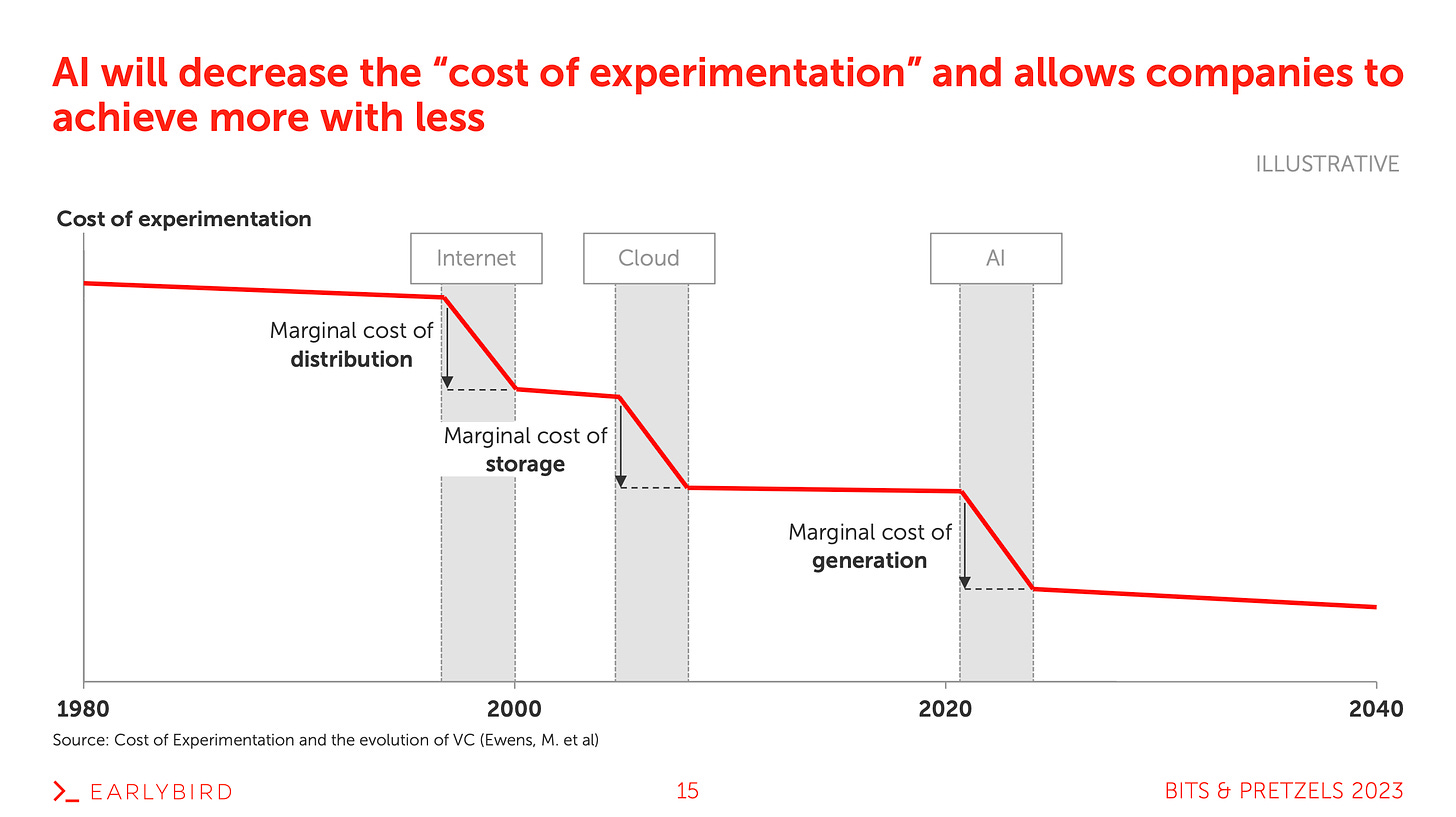

#1 Reduced cost of experimentation

The cost of experimentation is defined as the resources required to achieve a specific milestone, e.g. product-market fit or 1M ARR. Ewens, Nanda, and Rhodes-Kropf wrote an interesting paper in 2018 about the impact of technological platform shifts on the cost of experimentation. More generally they find that technological innovation allows companies to achieve more with less. This applies to AI too.

The internet reduced the marginal cost of distribution, the cloud reduced the marginal cost of storage, and AI is about to reduce the marginal cost of generation. Surely, we can add the steam engine and mobile too, but I wanted to limit the above slide to the major technologies relevant in the context of AI.

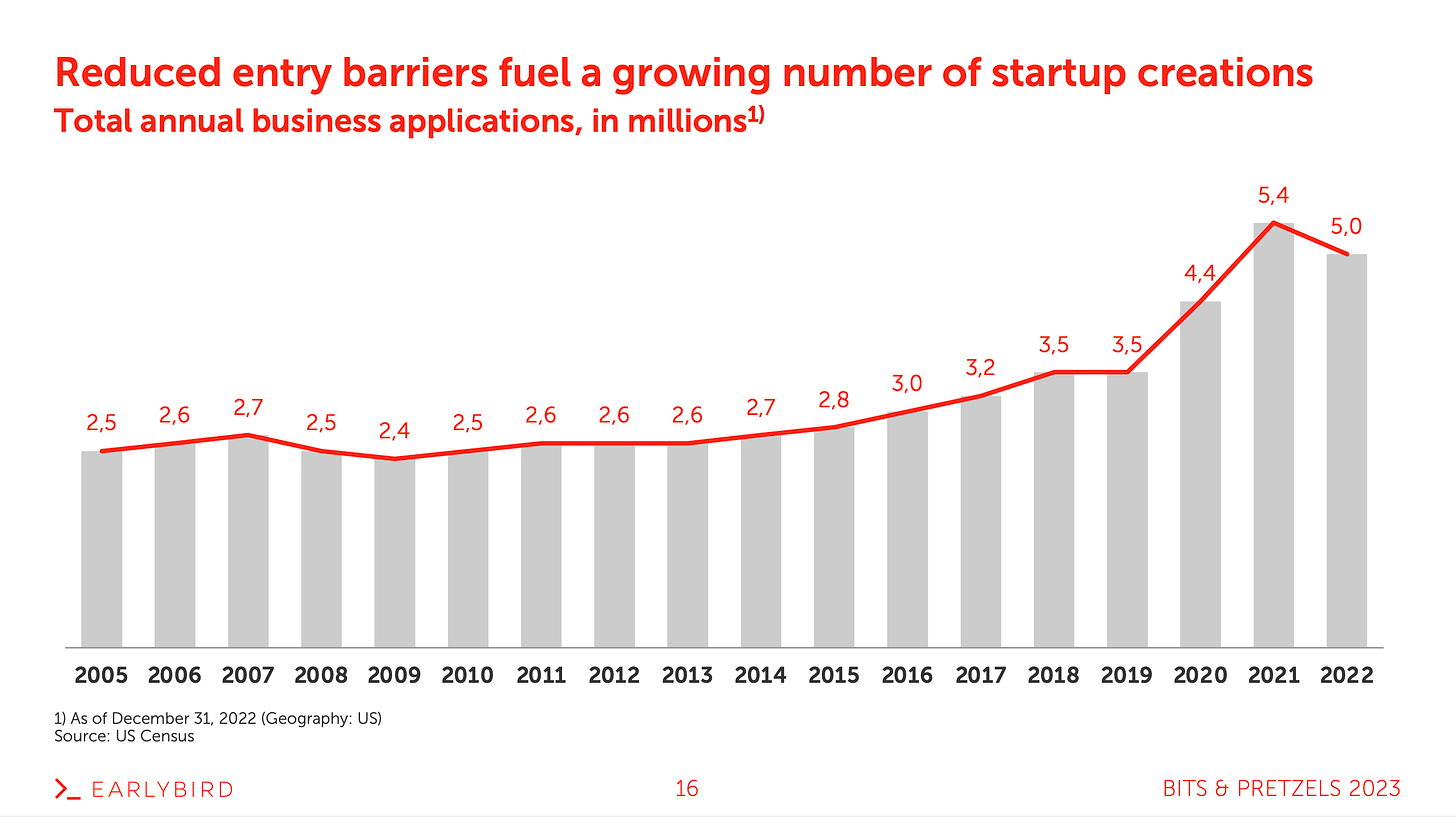

On a more macro level, reduced entry barriers historically fueled new startup creation. While the line was approximately constant over decades, we can see a few exogenous shocks that pushed the absolute level up. These step changes were likely driven by technological innovation, as highlighted in the referenced paper above. Of course, we need to account for macro factors like interest rates, yet I’m convinced that we will see more companies being founded - driven by lower entry barriers through AI. I’m excited to track the 2024 numbers!

#2 Business models shift to “selling work”

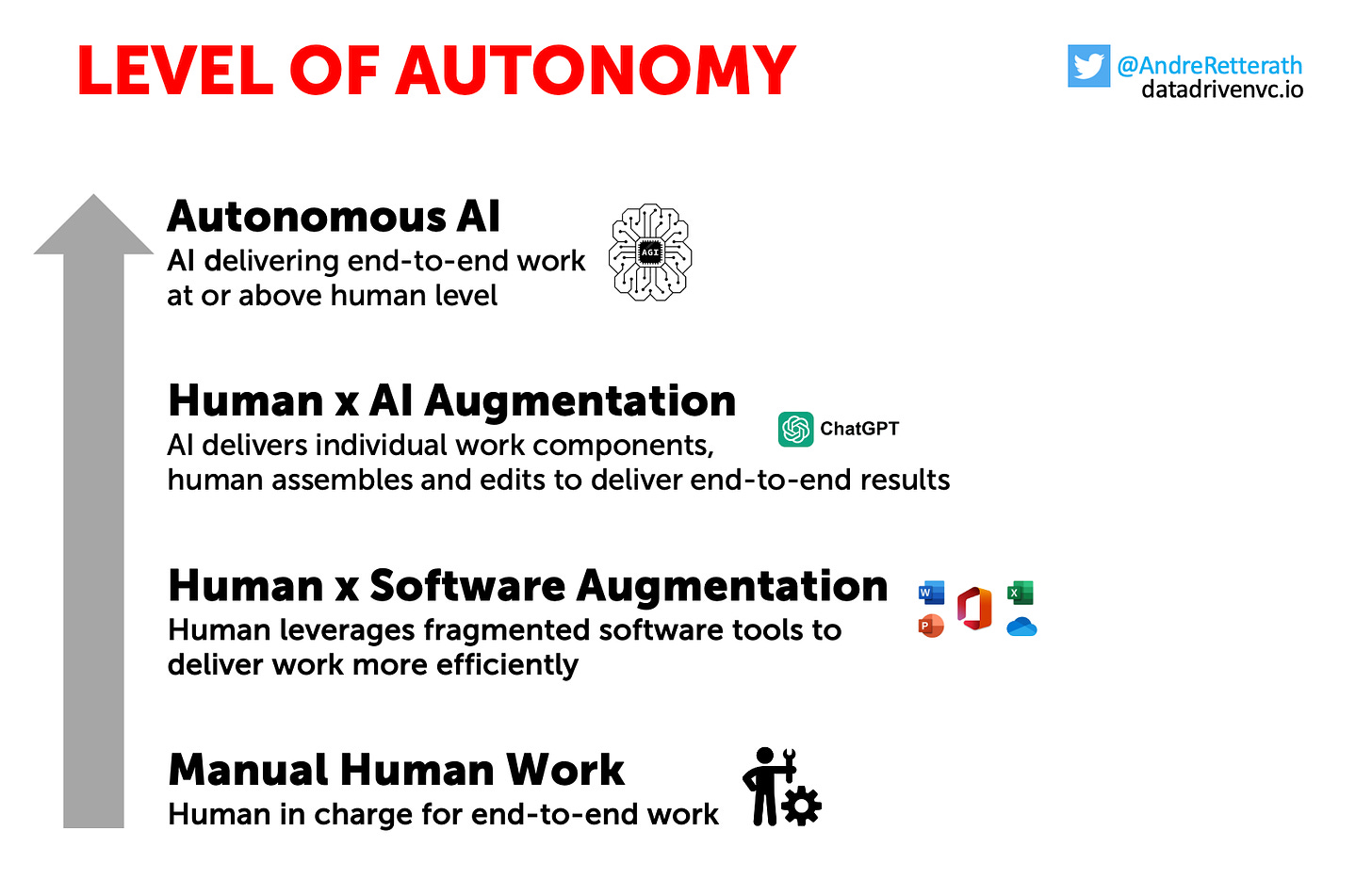

Manual Human Work: Humans started manual work with bare hands; think of the Stone Age. At some point, we invented tools like hammers and wheels to become more efficient and effective. Tools allow us to increase productivity and scale our input-output ratio. Technological innovations like the steam engine or the internet led to step increases in this ratio.

Human x Software Augmentation: Looking at modern humanity, we moved from manual work supported by physical tools to software-augmented setups in the early 1980s. Software-augmented means that the human worker is still in full control of both the individual work components and the overall end-to-end result, but leverages software to get more done with less.

Throughout the past decades, software tools started to specialize and, as a result, individual tool stacks became more fragmented. At some point, efficiency started to plateau as the growing number of specialized tools required more and more context switching, which in turn reduced productivity. A trade-off that was eventually ended by human laziness and a limited willingness to adopt ever more tools.

Human x AI Augmentation: While software was historically limited to helping humans perform a task faster and/or better, AI suddenly became capable of taking instructions and delivering more comprehensive work components. Just like an untrained intern, today’s AI still requires instructions from a human expert, who in most cases assembles several AI-produced components into an end-to-end work result.

Autonomous AI: Following ChatGPT as a generic chatbot, Customized GPTs were the natural next step towards autonomous agents. Agents are AI systems that interact with each other to solve more complex tasks without human intervention, i.e. AI chains or AI ping-pong. While we saw the first experiments with AutoGPT, BabyAGI, and others in 2023 already, I expect productized solutions to be rolled out across industries and use cases soon.

Once agents become productive, we’ll face a shift from “selling software” to “selling work”. Said differently, this means that we move away from using tens of different software tools in a fragmented “human x software augmentation” stack to more of a “human as coordinator of agents”. We will perform less work ourselves and spend more time reviewing, editing, and managing agents.

As a result, companies selling AI solutions will need to shift their business models as they’re no longer selling into IT departments, but start competing with HR budgets. Soon it will be all about the “cost to get a job done”, no matter if done manually by a human, a software-augmented human, an AI-augmented human, or an autonomous agent. The only thing that matters is the cost for a specific quality and timeline.

#3 Pricing shifts to result-based

In line with the business model shift above, I expect pricing models to adapt too. While we historically moved from fixed licenses to more flexible SaaS and from SaaS to usage-based models, I believe that AI solutions should be less input-driven (yes, companies should be able to pay their compute bills) and more output-driven. Hence, I expect that we’ll soon move from usage-based to result-based pricing.
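
To make the difference concrete, here is a minimal sketch of how the same three jobs would be billed under each model. All names, prices, and token counts are made up for illustration; they are assumptions, not real vendor pricing.

```python
from dataclasses import dataclass


@dataclass
class Job:
    tokens_used: int  # input side: compute the agent consumed
    succeeded: bool   # output side: did the customer accept the result?


def usage_based_bill(jobs, price_per_1k_tokens):
    """Charge for every token consumed, regardless of the outcome."""
    return sum(job.tokens_used / 1000 * price_per_1k_tokens for job in jobs)


def result_based_bill(jobs, price_per_result):
    """Charge a flat price, but only for jobs the customer accepted."""
    return sum(price_per_result for job in jobs if job.succeeded)


# Hypothetical month: two accepted jobs and one expensive failure.
jobs = [Job(12_000, True), Job(30_000, False), Job(8_000, True)]

usage = usage_based_bill(jobs, price_per_1k_tokens=0.5)
result = result_based_bill(jobs, price_per_result=10.0)
print(f"usage-based: {usage}, result-based: {result}")
```

Under usage-based pricing the customer pays for the failed job too; under result-based pricing the vendor carries that risk, which is why the vendor still needs the price per result to cover its compute bill.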

#4 Rise of AI marketplaces

Customized GPTs have democratized the creation of expert-level chatbots, i.e. the building part. The major limitation right now is still the exploration. Tens of thousands of Customized GPTs exist, but people struggle to find the right one to solve their problems. As described in “Create Custom GPTs: A Tutorial for Everyone”, OpenAI is expected to launch its GPT Store as a proprietary marketplace for Customized GPTs soon.

Similar to the launch of Apple’s App Store in July 2008, I expect the “GPT Store” to create the next gold-rush mood. Creators will start monetizing their unique GPTs and users will start assembling their personal library of GPTs. Long-term, I imagine OpenAI using the user interaction data to create a router functionality that classifies intents and matches them to the GPT of choice.

Eventually, there will be again one “mother model” that (in the back) routes your intents to customized GPTs (long-term likely even proper agents). Full circle: From one centralized, generic ChatGPT to a landscape of many fragmented, customized GPTs to a centralized GPT Store of customized GPTs.
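
As a toy illustration of the routing idea, the sketch below matches a request to a customized GPT by simple keyword overlap. This is purely my assumption of what a minimal router could look like: the registry, the GPT names, and the keywords are hypothetical, and a production router would more likely use a learned intent classifier trained on interaction data.

```python
import re

# Hypothetical registry: customized GPT name -> keywords describing its niche.
GPT_REGISTRY = {
    "contract-reviewer": {"contract", "nda", "clause", "legal"},
    "pitch-deck-coach": {"pitch", "deck", "investor", "fundraise"},
    "sql-helper": {"sql", "query", "database", "table"},
}
FALLBACK = "generic-chat"  # the generic "mother model" handles everything else


def route(request: str) -> str:
    """Return the GPT whose keywords overlap most with the request."""
    words = set(re.findall(r"[a-z0-9]+", request.lower()))
    scores = {name: len(words & keywords) for name, keywords in GPT_REGISTRY.items()}
    best = max(scores, key=scores.get)
    # No keyword matched at all: stay with the generic model.
    return best if scores[best] > 0 else FALLBACK


print(route("Can you review this NDA clause?"))
print(route("Tell me a joke"))
```

The user only ever talks to one entry point; which specialized GPT answers is decided in the back.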

Surely, there will be at least one independent alternative to OpenAI’s GPT Store, offering flexibility across LLMs and middle-layer solutions like vector DBs, RAGs, etc. In line with predictions #2 “selling work” and #3 “result-based pricing” above, I expect next-gen AI marketplaces to quickly compete with incumbent “freelance marketplaces” like Fiverr or Upwork on the demand side (customers looking to get a job done) and with platforms like Hugging Face on the supply side (devs looking to distribute their agents).

Incumbent work marketplaces have two shortcomings. Firstly, the supply side of human freelancers is naturally limited. They can only work so many hours. Secondly, human work quality often varies. Not only between freelancers but sometimes even for the same person. In contrast, AI marketplaces that deliver end-to-end work packages with result-based pricing are far more scalable and can perform at a more consistent level of quality. With smart review systems, we’ll probably see a power-law distribution in agent usage where the majority of requests route to very few well-performing agents. Anticipating this direction, I don’t expect incumbents to be agile enough to suddenly attract developers as a completely different supply-side audience.

AI platforms have one structural issue: the demand side. Platforms like Hugging Face tend to have developers on both the demand side and the supply side. It’s developers sharing something for developers. For AI marketplaces, this will be different as I expect it will be developers sharing agents for non-developers. While I believe it’s easier for AI platforms to attract non-devs on the demand side, it’s still a new game and the jury is out.

In any case, AI marketplaces will rise in 2024 and beyond and the competitive dynamics remain to be observed.

#5 Vertical integration: OpenAI eats Nvidia’s lunch

Reuters reported in October 2023 that OpenAI has been exploring making its own chips. No surprise, huh? Just look at other giants like Amazon. They gradually insourced their value chain and became a full-stack provider within their own domain. It seems natural to analyze major positions in your cost base and ask the question of make vs buy.

In 2024, I expect many more foundation model providers with critical mass to ask themselves the same question: Should we start making our own chips? Or should we form alliances to increase our negotiation power while at the same time reducing dependencies?

#6 Rise of open source

As proprietary AI systems become increasingly complex and costly, the shift towards open source is becoming more pronounced. It enables a wider range of developers and smaller companies to access cutting-edge tools without prohibitive costs. Moreover, it reduces fear and the risk of being locked in with a closed-source provider. Getting started with open-source solutions means keeping optionality.

Besides freedom and cost-effectiveness, open source also fosters a culture of collaboration and innovation, leading to more rapid advancements across communities. The resulting models are not only well-performing but also reproducible.

Llama, Bloom, Falcon, and Mistral are just the beginning. I expect that we’ll see many more open-source LLMs in 2024 and beyond. One question that will remain is how open these open-source models will actually be. Training? Datasets? Weights?

#7 LLMs become commodity as performance converges

Following the surge of open-source solutions, I expect that we’ll see models converging, meaning the once stark differences between leading models will be narrowing. I expect that proprietary access to large-scale computing resources allows certain players to undercut on prices. However, this won’t be sustainable as several players will achieve the same magnitude of scale.

As a result, LLMs will become a commodity. Differentiation and the creation of moats will only be possible through 1) outperformance on the go-to-market motion and scalable distribution channels, 2) access to proprietary datasets and unique domain expertise, and 3) productization of repeatable building blocks.

#8 Alliances evolve as moats become more difficult

The modern AI stack can be split into four different layers, see graphic below. While the application and middle layer companies remained mostly independent, the majority of foundation model providers decided to partner with companies at the infra layer to scale “access to compute”. OpenAI and Microsoft, Anthropic and Amazon, DeepMind and Google, and so on.

In the absence of sustainable defensibility mechanisms and moats around LLMs (=intelligence/foundation model layer), I expect that future collaborations will be mostly about 1), 2), and 3) above: Moats through distribution, access to proprietary datasets, and domain expertise.

A recent example of such an alliance is the $500M Series B funding round of our portfolio company Aleph Alpha where leading industry players such as Schwarz Group, Bosch, SAP, and others teamed up to create a powerful consortium. Read my behind-the-scenes episode here. Capital will be necessary, but not sufficient. It requires strong partnerships to become and remain an AI category leader.

#9 Exodus across the application layer

In 2023, every person with some tier-1 AI credentials was able to raise an extraordinary funding round on the back of “ex [INSERT HYPED AI COMPANY]” in their CV and the idea to create a wrapper for [INSERT REPETITIVE TASK THAT CAN EASILY BE AUTOMATED BY AI]. While many of these application layer tools gained impressive traction, it didn’t take long for lookalikes to show up. Low entry barriers can be a double-edged sword, I guess.

Even worse is the example of OpenAI’s DevDay in November 2023. With the introduction of Customized GPTs, they wiped out a full cohort of application-focused companies. If your company performs well, it will be a matter of time until someone creates a similar Custom GPT, attracts traffic, and points OpenAI to your niche. Just wait, the day will come and I’m convinced that for many applications, this day will come sooner rather than later in 2024.

#10 Investors become more cautious

Following the difficulties in differentiating on the foundation layer (see #7) and the exodus on the application layer (see #9), many investors will lose (or have already lost?) their money, despite initial excitement. As said in the introduction of this episode, I’m surprised by the low signal-to-noise ratio in AI, and this is particularly true for investors. There are some great AI investors out there, don’t get me wrong. But it can’t be healthy if all of a sudden everyone and their dog becomes an AI expert overnight and starts throwing money at every “ex-DeepMind” founder who isn’t up by the count of three.

Yes, early-stage investing is more about the founders than anything else. But… well, I couldn’t summarize it better than👇

Conclusion

You know, I love predictions. Not because I believe they’ll necessarily come true but rather because they help me adjust my reasoning. It’s a continuous feedback loop that only works if you conduct proper research, consider all available data, make the best-informed prediction about the most likely outcome, take note of the prediction including major input factors, and revise it once the actual event happens.

It’s more about gradual improvement than being right all the time. I’ll be excited to look back at the above predictions sometime in the future to draw my conclusions, generate learnings, and improve my reasoning.

What are your predictions? Do you agree, disagree, or have something to add? Like and leave a comment below :)

Stay driven,

Andre

Thank you for reading. If you liked it, share it with your friends, colleagues, and everyone interested in data-driven innovation. Subscribe below and follow me on LinkedIn or Twitter to never miss data-driven VC updates again.


If you have any suggestions, want me to feature an article, research, your tech stack or list a job, hit me up! I would love to include it in my next edition😎
